AI-powered visual search tools are among the most exciting yet challenging technologies in the industry at the moment.

Visual search tools are still being developed but have seen a lot of progress in recent years.

Visual search is one of the most complex and exciting innovations in the world right now. Humans can identify and recognize an image in about 13 milliseconds or less, so building technology that comprehends images the same way is very challenging. Visual search tools work by drawing on a variety of technologies, psychological insight, and a few neuroscientific concepts, which makes them exciting yet difficult to master and grasp.

Visual search is essentially recognizing certain targets within a busy visual environment. Though we practice this just about every day, the task is harder to imitate for search engine tools such as Google, Pinterest, and Bing. When a search engine performs an image search, it takes a text-based query and tries to return the images that best represent that text.

To conduct a visual search, these tools perform a more complicated process. Search engines first take an image as the query instead of text. They then run various tests through deep neural networks to identify the targets, including a target-distractor similarity color search, a form search, and a set-size color search. Through these tests, search engines pull the images and visuals that most closely resemble the targeted image. Even with today’s technologies, search engines still struggle to provide us with the results that we’re looking for. A large reason for this is our own limited understanding of how we process image recognition. Nonetheless, this field has seen a lot of progress and has improved drastically in the last few years. Here are some of the ways visual search technology is currently being applied.
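To make the idea of pulling the images "closest to resembling" a query more concrete, here is a minimal Python sketch. It assumes each image has already been reduced to a short list of numbers (a feature vector) by some neural network, and it ranks catalog images by cosine similarity to the query. The vectors, file names, and the `rank_by_similarity` helper are all made up for illustration; this is one common way to frame similarity ranking, not a description of how Google, Pinterest, or Bing actually implement it.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction, so treat it as dissimilar
    return dot / (norm_a * norm_b)


def rank_by_similarity(query_vec, catalog, top_k=3):
    """Score every catalog image against the query and keep the best top_k."""
    scored = [
        (name, cosine_similarity(query_vec, vec))
        for name, vec in catalog.items()
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Hypothetical feature vectors; a real system would get these from a trained
# neural network and they would have hundreds of dimensions, not three.
catalog = {
    "red_jacket.jpg": [0.9, 0.1, 0.0],
    "blue_jeans.jpg": [0.1, 0.8, 0.3],
    "red_scarf.jpg": [0.8, 0.2, 0.1],
}

query = [1.0, 0.1, 0.0]  # stand-in for the features of the photo being searched
results = rank_by_similarity(query, catalog, top_k=2)

for name, score in results:
    print(f"{name}: {score:.3f}")
```

With these toy numbers, the two red items rank above the jeans because their vectors point in nearly the same direction as the query. Real systems face the harder problem the paragraph above describes: producing feature vectors that capture what a person would consider "similar" in the first place.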

Google Lens

Two of the most anticipated features of the new Google Pixel are the built-in Google Lens and Google Assistant. With these features, users can quickly get help with what they see in front of them. Some of the primary ways that users can leverage Google Assistant and Lens are:

- Text: Saving information from posters, following URLs, recording phone numbers and email addresses from business cards, navigating to addresses.

- Barcodes: Quickly find products by scanning a barcode or QR code.

- Art, books, and movies: Learn more about a film based on trailers, reviews, or posters. Find book synopses and reviews from their covers. Pull up painting or artist information with a quick click.

- Landmarks: With a quick snap of famous landmarks, you’ll be able to pull up city information in seconds.

Advances in visual search have drastically helped these features improve and develop into useful tools.

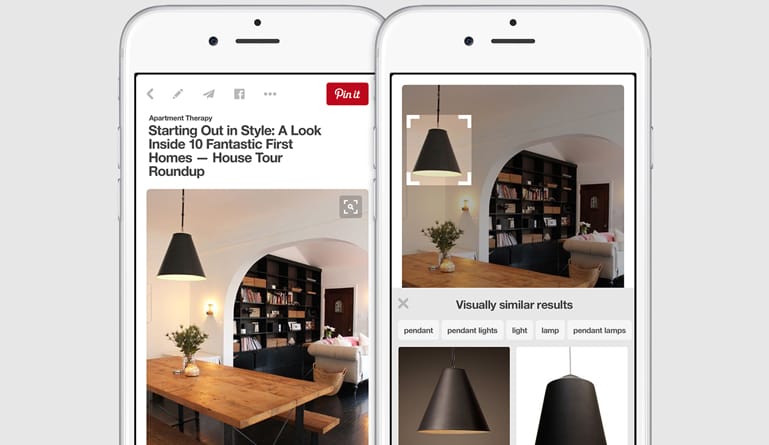

Pinterest Lens

This year, Pinterest ambitiously set out to change the world by releasing its own visual search tool, which aims not only to identify items and objects but also to show users how those items can be a part of their lives. This personalization aspect has a lot of potential to change the customer experience. With Lens Your Look, users can take pictures of clothing articles to find fashion inspiration for outfits. For example, users can take a picture of a jacket from their closet, and Pinterest can recommend what would look good with that jacket based on its fashion database.

Another tool that fashion enthusiasts are excited about is the Shop the Look tool. With this tool, users can take pictures of their friends’ outfits to learn where they can purchase the same items. By partnering with ShopStyle, Pinterest is able to draw information from more than 5 million products across 25,000 brands, with more being added each day.